TIB at WikidataCon: Part 2

This is the second installment of a two-part blog post covering the latest edition of WikidataCon, October 29–31, 2021. The first part of the blog covers the conference and its general themes, as well as recent updates to the vision and strategy of Linked Open Data development within the Wikimedia ecosystem.

Focus on TIB’s conference contribution

OSL team members participated in 3 presentations on Sunday, October 31st, in the Wikibase track and the Education and Science track. Learn more about each presentation below:

Wikibase as RDM infrastructure within NFDI4Culture

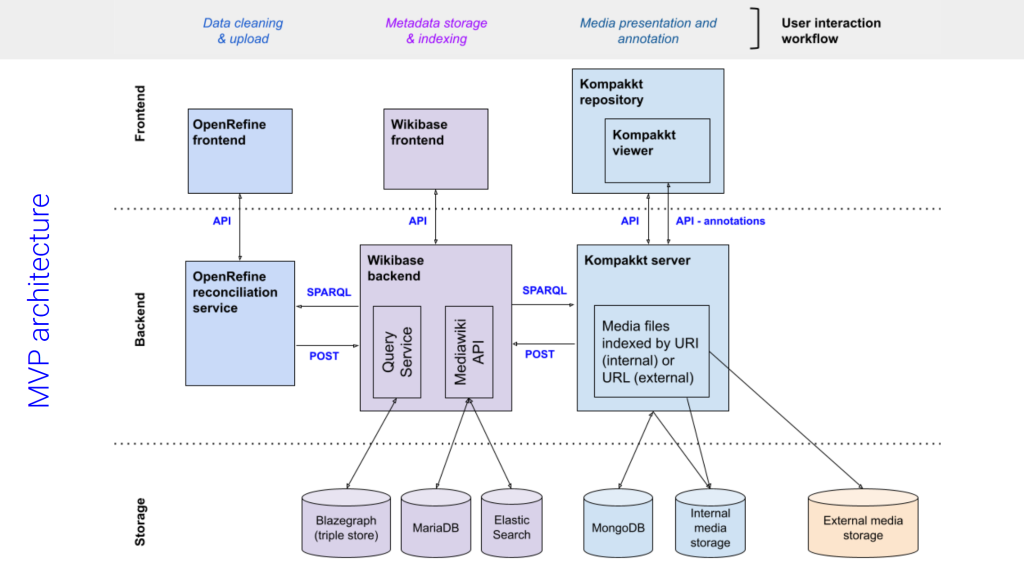

[Wikibase track] [Video recording] In the first half of this session, OSL’s Ina Blümel and Lucia Sohmen discussed the new minimum-viable-product (MVP) toolchain that we are developing in the context of NFDI4Culture’s Task Area 1 “Data capture and enrichment”. The MVP architecture relies on Wikibase to store and structure contextual metadata and user-contributed annotations for 3D models and reconstructions of cultural assets.

With a view towards sustainability, the MVP development aligns with the overall strategy of the Wikimedia Movement to support a decentralized ecosystem of federated Wikibase instances wherein data from Wikidata (and other data sources) is re-used and re-contextualized for specialist domains (e.g. cultural heritage). It further contributes to needs identified by various communities for additional, domain-specific extensions, tools, and user interfaces around the Wikibase software. In Phase 1 of development, we designed an accessible data upload pipeline which streamlines the metadata upload process (via the open source software OpenRefine, see below). What is more, we developed a custom branch of the open source software Kompakkt to serve as an extended frontend to the Wikibase repository. With Kompakkt, users can upload, view and annotate a range of files and formats of 2D, 3D, and audio-visual media in a modern, user-friendly, web-based interface. The next development phase will introduce the possibility to leverage the Wikibase API and SPARQL endpoint for bulk annotations as well. In the future, the MVP will be open to any project that wants to store, visualize and annotate complex visual data. [See presentation slides here.]

The second part of the session focused on the potential benefits and challenges of using Wikibase in the context of “The 4Culture Knowledge Graph” (part of Task Area 5, to which TIB also contributes staff and infrastructure resources). The presentation was delivered by Harald Sack from FIZ Karlsruhe, and provided insights into the need for formal semantics to be an integral part of the 4Culture Knowledge Graph, and not just an add-on. Wikibase and its MediaWiki GUI increase user accessibility to LOD and offer opportunities for collaboration and community engagement, which are important incentives for broader adoption within the NFDI consortium. At the same time, the lack of native semantics and W3C standard vocabularies (RDF, RDFS, OWL) in Wikibase negatively impacts interoperability, data reuse and federation outside the ‘bubble’ of the Wikidata/Wikibase ecosystem. The presentation offered several mitigation strategies for addressing the issue of formal semantics that are currently being tested and evaluated at FIZ Karlsruhe. These included declarative semantic mapping, data import/export (via the triple store), and development of a dedicated semantic extension. The results of evaluating these workarounds will be published as Guidelines and Best Practices to enable the NFDI4Culture community to share their data resources within a federated Knowledge Graph via Wikibase instances. [Download presentation slides here.]

Using OpenRefine with arbitrary Wikibase instances

[Wikibase track] [Video recording] Building on the presentation of the 3D annotation MVP toolchain, Lozana Rossenova and Lucia Sohmen delivered a lightning talk which expanded on the data pipeline developed for the MVP. The talk focused on the role of OpenRefine in the data pipeline. OpenRefine allows users to clean data, transform it and reconcile it against other open data sources, like Wikidata. It also makes it possible to upload data directly to Wikidata, as well as pull data from it. Recently, this functionality was extended to make it possible to connect to any Wikibase instance. However, this requires additional server-side and frontend configurations. Much of this is not yet fully documented, so with this presentation we aimed to provide a succinct overview of all necessary steps in the process. We also presented a service box, developed at TIB, that automates the server-side setup.

We demoed the steps users need to perform in the frontend and tested uploading sample data to a Wikibase instance. Given that the version of OpenRefine that allows Wikibase connection is still a beta pre-release, we did encounter some bugs during the live demonstration. Fortunately, OpenRefine’s lead developer Antonin Delpeuch was also in the audience and took note of them. We plan to work closely with the OpenRefine team to help with their documentation and bug testing efforts since OpenRefine is an essential part of our data upload pipeline. And in the spirit of “it takes a village to raise a tool” (see Part 1 of this blog post), we want to support a tool that plays a vital role across many community projects within the Wikimedia Movement at large, as well as the 4Culture community more narrowly. [See presentation slides here.]

Integration of Wikidata 4OpenGLAM into data and information science curricula

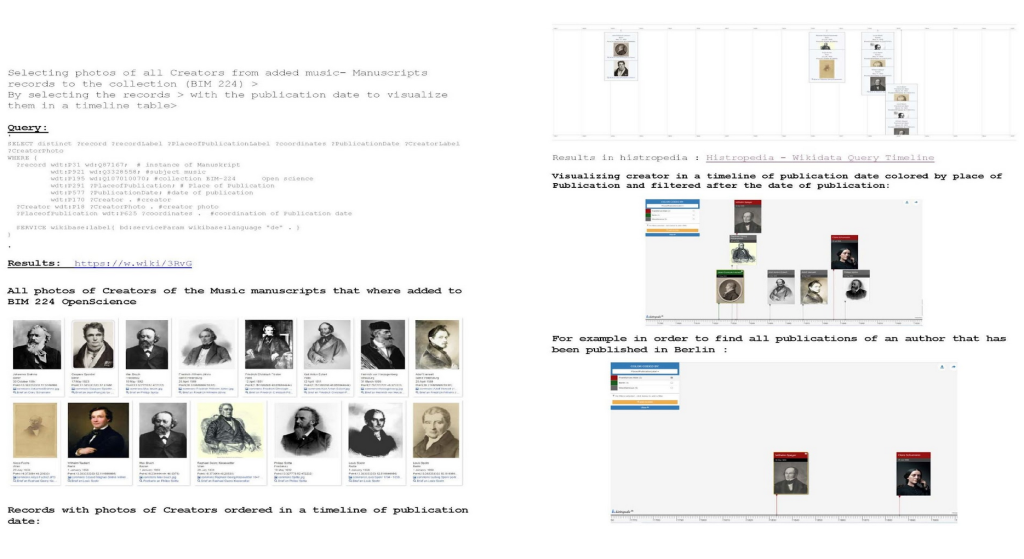

[Education and Science track] [Video recording] The use of Wikidata and OpenRefine in academia is not new, as both are good tools for teaching data science skills. There are many examples of this and a lot of material that can be used in teaching and for self-study. In this presentation, Ina Blümel showcased several new online resources that were developed last semester for and with students of information science at Hannover University of Applied Sciences and Arts. The resources came out of a project on linking and visualising cultural heritage data using Wikidata and out of two Data Science courses.

We focussed on the description and discussion of how to integrate student work and the projects of the Open Science Lab (9 projects in total, out of which 6 are in OpenGLAM, and 4 use Wikidata and/or Wikibase) and on how to motivate students to engage with more advanced tasks in the field of cultural heritage. Lucia Sohmen presented tasks she designed for one of the courses to teach students different ways of interacting with open data. These included download via an API (OAI-PMH) and by scraping IIIF manifests using a Python library; cleaning and transforming data followed by uploading it to Wikidata – all through OpenRefine; and querying and visualizing their data by using Wikidata’s SPARQL interface. [See presentation slides here.]
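As a rough illustration of the first two kinds of task mentioned above, the following Python sketch builds an OAI-PMH `ListRecords` request URL and collects image URLs from a IIIF manifest. It is not the course material itself: the function names, the `example.org` URLs and the sample manifest are hypothetical, the manifest is assumed to follow the IIIF Presentation API 2.x layout (`sequences` → `canvases` → `images` → `resource`), and the talk did not specify which Python library the students used.

```python
from urllib.parse import urlencode


def oai_list_records_url(base_url, metadata_prefix="oai_dc", set_spec=None):
    """Build an OAI-PMH ListRecords request URL for a given endpoint."""
    params = {"verb": "ListRecords", "metadataPrefix": metadata_prefix}
    if set_spec:
        # Optional: restrict harvesting to one set of the repository.
        params["set"] = set_spec
    return f"{base_url}?{urlencode(params)}"


def iiif_image_urls(manifest):
    """Collect image URLs from a manifest dict.

    Assumes the IIIF Presentation API 2.x structure; manifests in other
    versions are laid out differently and would need separate handling.
    """
    urls = []
    for sequence in manifest.get("sequences", []):
        for canvas in sequence.get("canvases", []):
            for image in canvas.get("images", []):
                resource = image.get("resource", {})
                if "@id" in resource:
                    urls.append(resource["@id"])
    return urls


# Hypothetical manifest, trimmed to the fields the function reads.
sample_manifest = {
    "@id": "https://example.org/iiif/book1/manifest",
    "sequences": [
        {
            "canvases": [
                {
                    "images": [
                        {
                            "resource": {
                                "@id": "https://example.org/iiif/page1/full/full/0/default.jpg"
                            }
                        }
                    ]
                }
            ]
        }
    ],
}

print(oai_list_records_url("https://example.org/oai"))
print(iiif_image_urls(sample_manifest))
```

In a real exercise the manifest would be fetched over HTTP and parsed as JSON before being passed to `iiif_image_urls`, and the OAI-PMH response (XML) would still need to be parsed and paged through.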

Wikidata & Education: A Global Panel

[Education track] [Video recording]

Outlook

The next event where many of these topics will be presented is the Culture Community Plenary. If you want to stay up to date, you can follow Open Science Lab on Twitter and sign up for the NFDI4Culture newsletter.

Dr Lozana Rossenova is currently based at the Open Science Lab at TIB, and works on the NFDI4Culture project, in the task areas for data enrichment and knowledge graph development.

... is deputy head of the Open Science Lab at TIB and holds a professorship in linked data in information science at Hannover University of Applied Sciences and Arts.
